What is biohacking – the latest wellness trend taking over TikTok?

Biohacking is the latest wellness trend taking the internet by storm, with TikTok witnessing a staggering 316 per cent increase in searches.

More notably, Bryan Johnson, the founder of KernelCo and Blueprint, has contributed to the intrigue after investing approximately $2 million per year to reduce his biological age.

The 46-year-old tech tycoon recently made headlines for tracking his nighttime erections, in an attempt to reach the level of an 18-year-old. How exactly does he do that, you may ask? Well... He gives himself electric shocks in his private area.

Johnson told Steven Bartlett on The Diary of a CEO podcast that nighttime erections "are actually a meaningful health indicator" because they "represent psychological health, cardiological health."

While that's one extreme measure of biohacking, there are other methods behind the trend.

A spokesperson for Snusboss said: "Biohacking refers to the practice of making changes to one’s biology, typically through self-experimentation and the use of technology, to enhance physical and cognitive abilities, optimize health, and achieve personal goals."

"Currently #biohacking has 791 million views on TikTok, and continues to gain popularity, particularly with Millennials and Generation Z who are becoming more interested in the scientific research behind their health and wellbeing and are also open to experimenting with new techniques," he continued.

Biohacking is essentially an unconventional experimental biotechnology that is believed to help improve overall wellbeing. Here are several ways people are implementing it into their lives:

Ice cold plunge

"Cold plunging is an aspect of cold-water therapy or cold-water immersion, which involves immersing oneself in cold water.

"It is recommended to complete 11 minutes of cold-water exposure per week, which can be broken into sessions of around three minutes each.

"Studies suggest 50 to 59 degrees Fahrenheit (10 to 15 degrees Celsius) to be an optimal temperature range for cold plunges focused on reducing muscle soreness.

"Doing this will also help to reduce inflammation, improve circulation, and enhance recovery after exercise. It is also shown to boost the immune system, improve sleep quality and help with stress management."

Optimise your sleep

"If you are getting around seven to nine hours of sleep a night, you will encourage muscle growth and repair, help keep your brain alert, improve your blood sugar levels and even enhance your lifespan.

"Whilst there are several tips on social media such as eating certain fruits before bed, avoiding electronic devices and avoiding alcohol, one of the most important rules of optimizing sleep is maintaining a good circadian rhythm.

"This means going to bed and waking up at the same time every day, even on weekends. To do so, try maintaining a routine and try to spend time outdoors during daylight, especially in the morning. This is because natural light exposure helps regulate your body's internal clock and promotes alertness during the day.

"To measure progress, you can use devices such as smartwatches that track sleep duration and quality."

Regular saunas

"Saunas, small rooms heated with hot air or steam, are said to have cardiovascular health benefits.

"When exposed to high temperatures, the body works to cool itself down by increasing heart rate, blood flow, and cardiac output. This is known to decrease blood pressure, leading to benefits for cardiovascular health and longevity.

"For best results from this biohack, choose a temperature between 175-195°F (80-90°C) with 10-20 per cent humidity for 30 minutes at least three times a week."

Himalayan salt in water

"Electrolyte levels are important for the body to function properly. They help to balance the amount of water in your body, balance your acid/base (pH) levels and move nutrients into your cells.

"Your body makes electrolytes naturally, as well as obtaining them from food, drinks and supplements.

"However, if your levels drop, mineral-rich Himalayan salt contains lots of electrolytes and is proven to help detox the body, supporting kidney and liver functions.

"Therefore, around one teaspoon of Himalayan salt added to one litre of water is recommended per day.

"Not only will it keep you feeling energised, but it will also help to boost your metabolism."

Moderate coffee intake

"Low to moderate doses of caffeine (50–300 mg) are scientifically proven to cause increased alertness, energy, and ability to concentrate.

"Science also suggests drinking two cups of coffee a day could help ward off heart failure, when a weakened heart has difficulty pumping enough blood to the body.

"Both regular and decaf coffee have a protective effect on the liver. Research shows that coffee drinkers are more likely to have liver enzyme levels within a healthy range than people who don’t drink coffee.

"Experts say it is healthy to drink a maximum of 2.5 cups of coffee per day."

Breathwork

"We breathe every single day, but we often don’t even think about how we are breathing.

"In times of stress, our breath automatically responds by shortening and speeding up, and this can cause further strain on the body.

"With breathwork practice, the body can be trained to automatically control breathing and utilize it as a calming tool during times of stress.

"Breathing also directly affects how much oxygen our cells are getting, so when we deepen and slow down the breath from its usual pattern, we allow more oxygen to enter each cell.

"To practice breathwork, inhale for 4 seconds and exhale for 6 seconds. Repeat this for around 10 minutes per session. For best results, do this once in the morning and once in the evening."

Red Light Therapy (RLT)

"Red light therapy (RLT) is a popular method used to optimize overall skin health. RLT also helps to boost muscle recovery, reduce pain and inflammation, support nervous system health, and generally increase energy levels.

"For those who experience inflammation and pain with Achilles tendinitis, and have signs of skin ageing and skin damage, research shows RLT may smooth your skin and help with wrinkles. RLT is also known to help with acne scars, burns, and signs of UV sun damage.

"To complete the treatment, lie in a full-body LED red light bed or pod or be treated by a professional with a device that's outfitted with panels of red lights.

"Professionals recommend trying red light therapy three times per week for 10 minutes each time for a minimum of one month."

2023-11-22 20:19

Will Pokimane accept Kick deal? Ed Craven continues to pursue pro streamer despite her controversial 'morals and ethics' statement

Despite Pokimane's firm refusal to sign with Kick, even for a $100M deal due to moral concerns, the Kick co-founder remains optimistic

2023-06-27 20:25

New study shows that early humans deliberately made stones in spheres

A study of 150 stones dating back 1.4m years shows early humans were deliberately crafting spherical shapes, but nobody knows why.

The Hebrew University of Jerusalem made the findings after analysing the limestone balls, which were unearthed in Ubeidiya, a dig site in Israel’s Jordan Rift Valley.

Scientists have previously speculated that the stones, which were discovered in the 1960s and serve no discernible purpose, became round after being used as hammers.

But the university’s team reconstructed the steps required to create the so-called spheroids and found they were part of a “preconceived goal to make a sphere”.

The researchers used 3D analysis to retrace how they were made based on the markings and geometry of the spheroids. They concluded that the objects were intentionally “knapped”, the technique used to shape stone by hitting it with other objects.

Antoine Muller, a researcher at the university’s Institute of Archaeology, said: “The main significance of the findings is that these spheroids from ‘Ubeidiya appear to be intentionally made, with the goal of achieving a sphere.

“This suggests an appreciation of geometry and symmetry by hominins 1.4 million years ago.”

Early humans clearly had some reason for making the balls, but what exactly that is remains a mystery.

Muller said: “We still can’t be confident about what they were used for. A lot of work needs to be done to narrow down their functionality.”

2023-09-09 00:29

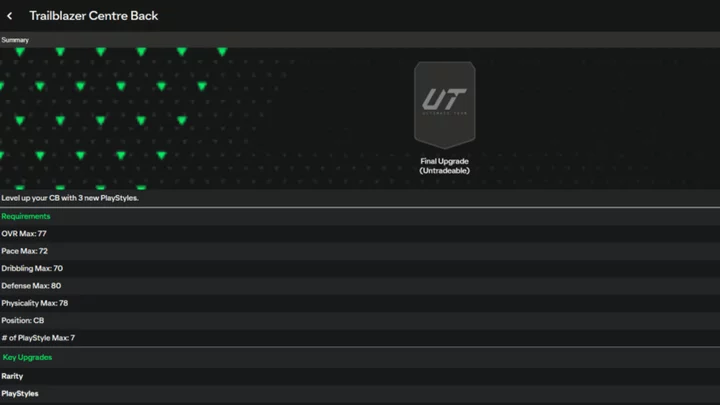

FC 24 Trailblazer Center Back Evolution: How to Complete, Best Players to Use

FC 24 Trailblazer Center Back Evolution program details including how to complete each level, the best players to use, the full list of player rewards and more.

2023-10-26 02:18

It’s Grilling Season, Which Means It’s Also Clean-Your-Grill Season—Here Are the Best Ways To Do It

Charred gunk on your grill can inhibit performance and lead to a bummer of a burger. Here’s how to fix it.

2023-05-31 06:29

Janelle Brown's daughter Maddie praised as she bonds with Savanah: 'She's welcoming to all her siblings'

'It's really cool that she not only makes time but space for them in her home,' a fan said, praising Maddie

2023-06-08 09:28

CesiumAstro Expands Advisory Board with Appointment of the Honorable William “Mac” Thornberry as Strategic Advisor

AUSTIN, Texas--(BUSINESS WIRE)--May 9, 2023--

2023-05-09 19:55

An ESG Loophole Helps Drive Billions into Gulf Fossil Fuel Giants

Saudi Aramco, the world’s largest oil company, has become an unlikely beneficiary of funds earmarked for sustainable investments

2023-07-11 12:18

Study explains how masturbation helped the evolution of humanity

Masturbation is far more important in the timeline of human evolution than ever previously thought.

In fact, we might not be here at all if it weren’t for primates masturbating thousands of years ago, a new study has claimed.

New research published in Proceedings of the Royal Society B has focused on masturbation in male primates and its role in reproductive success.

“Masturbation is common across the animal kingdom but is especially prevalent amongst primates, including humans,” the study authors said in a statement.

They went on to say that masturbation “was most likely present in the common ancestor of all monkeys and apes” before saying that it might have influenced mating behaviour.

“Masturbation (without ejaculation) can increase arousal before sex,” the authors wrote. “This may be a particularly useful tactic for low-ranking males likely to be interrupted during copulation, by helping them to ejaculate faster.”

According to the researchers, regular ejaculation evolved as a trait among male primates where they faced competition. That’s because it “allows males to shed inferior semen, leaving fresh, high-quality sperm available for mating, which are more likely to outcompete those of other males.”

It also helped male primates “by cleansing the urethra (a primary site of infection for many STIs) with ejaculate”.

Things were less clear with female primates, with the study authors stating that “more data on female sexual behavior are needed to better understand the evolutionary role of female masturbation.”

“Our findings help shed light on a very common, but little understood, sexual behavior,” said lead author Dr. Matilda Brindle, of University College London.
“The fact that autosexual behavior may serve an adaptive function, is ubiquitous throughout the primate order, and is practiced by captive and wild-living members of both sexes, demonstrates that masturbation is part of a repertoire of healthy sexual behaviors.”

2023-06-07 20:28

When Can I Pre-Load Final Fantasy 16?

Players can pre-load Final Fantasy 16 on June 19, three days before the game releases.

2023-06-16 06:45

Google leaks its own Pixel 8 Pro because that's what Google does

Sometimes it seems like it has to be on purpose. Google, a company known for

2023-08-30 17:16

5 Fascinating Conlangs You Can Learn

A conlang is a constructed language, where someone has intentionally created its grammar, vocabulary, and phonology. Here are five you can learn.

2023-08-10 20:23

You Might Like...

Lies of P Pre-Load Times

Argyle Grows Number of Consumer Verifications 100% YoY, Welcomes 35+ New Customers Across Mortgage, Lending, and Banking Industries

Quectel BC660K-GL module achieves certification for leading Australian and New Zealand networks

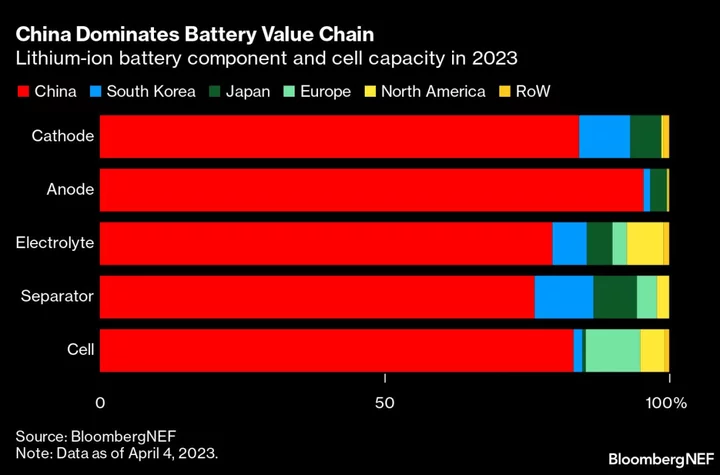

Cummins, Daimler and Paccar Partner on $3 Billion Battery Plant for Electric Trucks

Zimbabwe Exchange to List Carbon Credits as State Upends Trade

Top 5 video games that trolled famed YouTubers PewDiePie, Markiplier and more

Sam’s Club MAP Closes the Loop for Advertisers with Media and Sales Performance Dashboard

Instagram down for more than 98,000 users - Downdetector