FIFA 23 Super Lig TOTS Upgrade SBC: How to Complete

FIFA 23 Super Lig TOTS Upgrade SBC is now available. Here's how to complete the SBC and whether it's worth it.

2023-05-31 01:27

Amazon Fire TV Cube (2022) Review

Editors' Note: This is the most recent version of the Amazon Fire TV Cube. Read

2023-06-23 00:15

Coinbase launches nonprofit crypto advocacy group

Coinbase is launching an independent nonprofit organization for advancing pro-crypto legislation through Congress, hoping to build on recent legislative and legal wins for the digital asset industry.

2023-08-15 00:17

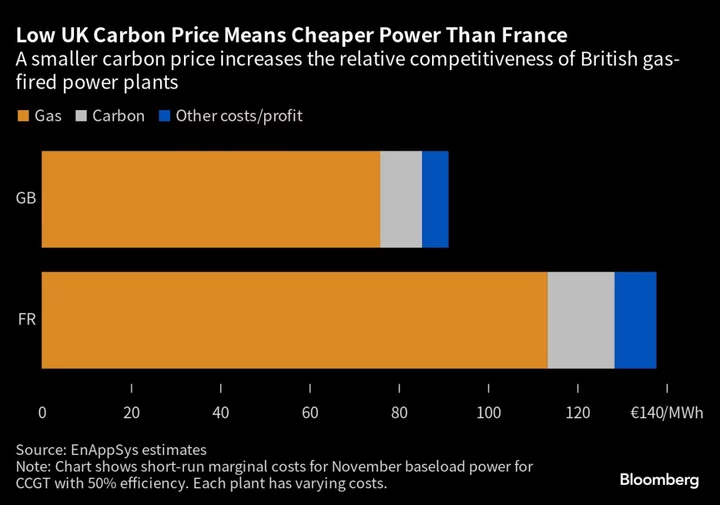

UK to Boost Power Exports This Winter After Carbon Prices Plunge

The UK will increase power sales to the continent this winter as a slump in carbon prices makes

2023-09-19 13:58

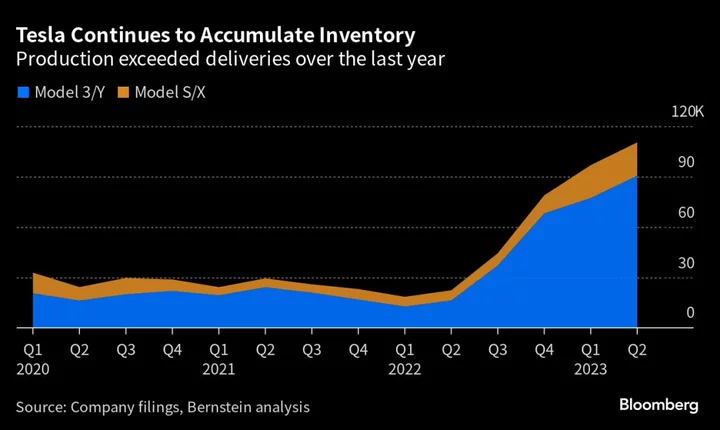

Tesla Tests the Limits of Elon Musk’s Minimal Model Strategy

There was a lot to like in Tesla Inc.’s latest quarterly numbers. The carmaker delivered roughly 18,000 more

2023-07-05 17:55

FTC hits Amazon with $25 million fine for violating child privacy with Alexa voice assistant

Amazon has agreed to pay a $25 million civil penalty to settle Federal Trade Commission allegations it violated a child privacy law and deceived parents by keeping for years kids’ voice and location data recorded by its Alexa voice assistant

2023-06-01 12:15

‘Billions’ of Intel computers potentially affected by huge security vulnerability

A major security vulnerability had the potential to hit “billions” of computers, according to the Google researchers who discovered it. The security flaw, dubbed “Downfall”, attacked Intel processors in a way that would allow hackers to steal passwords, encryption keys and private data from users.

That’s according to Daniel Moghimi, the senior research scientist at Google who found the problem and disclosed it this week. He alerted Intel about the issue with its chips, and the company has since sent out an update to fix it. But the issue could have affected “billions of personal and cloud computers”, Google said.

“Had these vulnerabilities not been discovered by Google researchers, and instead by adversaries, they would have enabled attackers to compromise Internet users,” the researchers wrote in a blog post.

The attack worked by breaking through the boundary that is intended to keep software safe from attacks on the hardware. In doing so, attackers would have been able to find data that belongs to other users on the system, the researchers said. It did so by exploiting technologies that are intended to speed up various processes on the chip. Attackers were able to exploit those tools to steal sensitive information that should have stayed available only to its owner, when they were signed in.

The nature of the attack means that hackers would need to be on the same physical processor as the person they are attacking. But that would be possible using malware, or the shared computing model that powers cloud computing, for instance.

Intel said that the problem does not affect recent versions of its chips, and that the fix does not cause major problems. But it did suggest that users could disable the fix, if they thought the risk was not worth the slight drawbacks in performance. The company also told Bleeping Computer that “trying to exploit this outside of a controlled lab environment would be a complex undertaking”.

2023-08-10 00:48

Musk Calls for AI ‘Regulatory Structure,’ Warns Congress of Risk

Elon Musk called for a “regulatory structure” for artificial intelligence after warning US senators about risks to civilization

2023-09-14 05:24

Amazon's devices chief David Limp to retire after 13 years

Amazon.com's devices chief David Limp would retire in the coming months, in a high-level departure from a division

2023-08-15 05:50

Scientists warn of threat to internet from AI-trained AIs

Future generations of artificial intelligence chatbots trained using data from other AIs could lead to a downward spiral of gibberish on the internet, a new study has found.

Large language models (LLMs) such as ChatGPT have taken off on the internet, with many users adopting the technology to produce a whole new ecosystem of AI-generated texts and images. But using the output data from such AI systems to further train subsequent generations of AI models could result in “irreversible defects” and junk content, according to a new, yet-to-be-peer-reviewed study.

AI models like ChatGPT are trained using vast amounts of data pulled from across internet platforms, which has mostly remained human-generated until now. But AI-generated data produced by such models has a growing presence on the internet.

Researchers, including those from the University of Oxford in the UK, attempted to understand what happens when several subsequent generations of AIs are trained off each other. They found the widespread use of LLMs to publish content on the internet on a large scale “will pollute the collection of data to train them” and lead to “model collapse”.

“We discover that learning from data produced by other models causes model collapse – a degenerative process whereby, over time, models forget the true underlying data distribution,” scientists wrote in the study, posted as a preprint on arXiv.

The new findings suggest there is a “first mover advantage” when it comes to training LLMs. Scientists liken this change to what happens when AI models are trained on music created by human composers and played by human musicians. The subsequent AI output then trains other models, leading to a diminishing quality of music.

With subsequent generations of AI models likely to encounter poorer quality data at their source, they may start misinterpreting information by inserting false information in a process scientists call “data poisoning”. They warned that the scale at which data poisoning can happen drastically changes after the advent of LLMs.

Just a few iterations of data can lead to major degradation, even when the original data is preserved, scientists said. And over time, this could lead to mistakes compounding and forcing models that learn from generated data to misunderstand reality. “This in turn causes the model to misperceive the underlying learning task,” researchers said.

Scientists cautioned that steps must be taken to label AI-generated content so it can be told apart from human-generated content, along with efforts to preserve original human-made data for future AI training.

“To make sure that learning is sustained over a long time period, one needs to make sure that access to the original data source is preserved and that additional data not generated by LLMs remain available over time,” they wrote in the study. “Otherwise, it may become increasingly difficult to train newer versions of LLMs without access to data that was crawled from the Internet prior to the mass adoption of the technology, or direct access to data generated by humans at scale.”

2023-06-20 13:57

Bungie won't abandon Destiny 2 with the arrival of Marathon

Bungie has vowed to keep supporting 'Destiny 2' when 'Marathon' is here.

2023-07-03 19:28

Arm set to target IPO valuation of $50 billion-$55 billion-sources

By Echo Wang. NEW YORK: Arm Holdings Ltd is targeting a valuation between $50 billion and $55 billion

2023-09-02 05:56

You Might Like...

Amazon Smart Plug Review

Globe Group Revolutionizes Healthcare Access in the Philippines With Launch of Groundbreaking KonsultaMD SuperApp

Tim Scott pushes back on DeSantis over Florida curriculum: 'No silver lining' in slavery

Panos Panay quits Microsoft. Is the upcoming Surface event in trouble?

FEELM and RELX international Launch First Whole Chain Recycling Scheme for Disposable Vapes in the UK

Singapore Defends Climate Commitment After Kempen ESG Exclusion

Fortnite Optimus Prime Battle Pass Skin Revealed

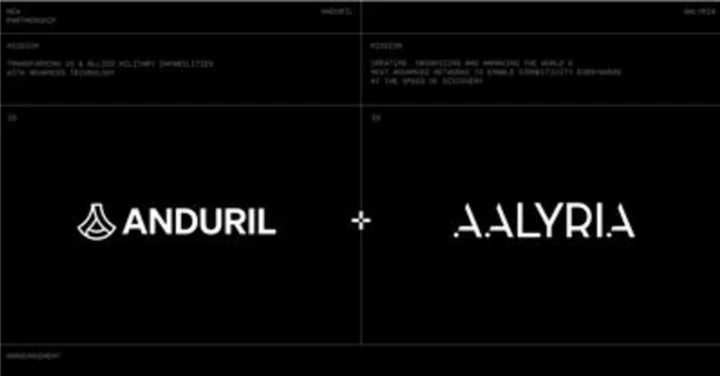

Aalyria & Anduril Partner to Integrate Technologies to Enhance Battlefield Capabilities